Different AI models: installing your own ChatGPT- or DeepSeek-like model on your own computer with Ollama, and installing ChromaDB to store AI vectors.

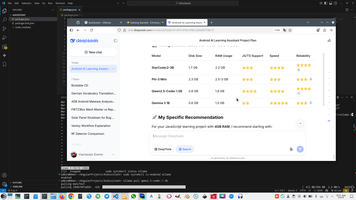

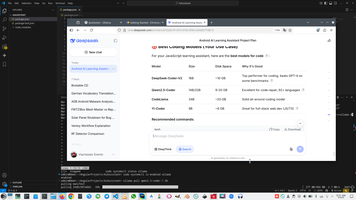

There are a number of AI LLM models (LLM models comparison). Within one model family, each variant has a different purpose and different capabilities depending on its size; see, for example, Qwen-model functionality comparison by size. On my different computers I use different models for different purposes, but for my current purpose I decided to use qwen2.5-coder.

There are various LLM platforms that let you download and install an LLM model locally and that provide services around the model, such as an HTTP REST API (Comparison LLM-platforms). All of these platforms let you run an AI service locally, without internet access. After carefully investigating this question (also with AI), I decided to use Ollama.

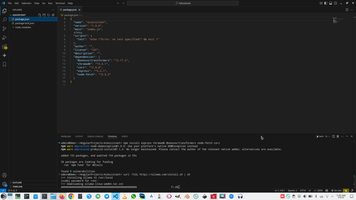

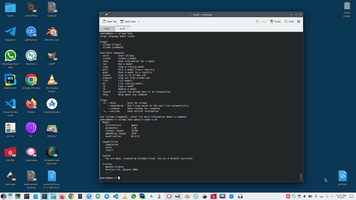

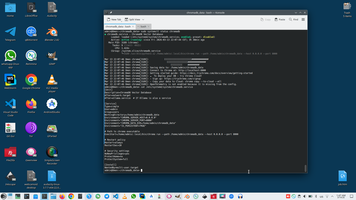

Ollama can install most existing LLM models (https://ollama.com/library). The library contains a number of models wrapped with an HTTP API, and a model can be installed with one simple command, ollama pull.

The main constraint is the memory of the computer where Ollama will be deployed locally, so I decided to use the 1.5b version of the qwen2.5-coder model, that is, qwen2.5-coder:1.5b.
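As a small illustration of the pull step, here is a sketch that builds and runs the ollama pull command from Python. The helper names pull_command and pull_model are my own for this example, and it assumes the Ollama CLI is already installed and available on PATH.

```python
import subprocess


def pull_command(model: str, tag: str = "latest") -> list[str]:
    """Build the argument list for `ollama pull <model>:<tag>`."""
    return ["ollama", "pull", f"{model}:{tag}"]


def pull_model(model: str, tag: str = "latest") -> int:
    """Run the pull and return the exit code.

    Assumption: the `ollama` executable is installed and on PATH.
    Raises subprocess.CalledProcessError if the pull fails.
    """
    return subprocess.run(pull_command(model, tag), check=True).returncode
```

For example, pull_model("qwen2.5-coder", "1.5b") runs the same command you would type in a terminal: ollama pull qwen2.5-coder:1.5b.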
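To make the memory argument concrete, here is a rough back-of-the-envelope sketch. It assumes the weights dominate memory use and that each weight is stored in a fixed number of bits; the bit widths below are illustrative assumptions, not the exact quantization of any particular Ollama build, and real usage is higher because of the context cache and runtime overhead.

```python
def estimate_weights_gb(parameters: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    total_bytes = parameters * bits_per_weight / 8
    return total_bytes / 1e9


# Illustrative comparison: a 1.5b model vs. a 7b model.
# The bit widths are assumptions chosen only for this example.
for name, params in [("1.5b", 1.5e9), ("7b", 7e9)]:
    for bits in (16, 8, 4):
        size = estimate_weights_gb(params, bits)
        print(f"{name} at {bits}-bit: about {size:.2f} GB for weights alone")
```

Under these assumptions a 1.5b model fits comfortably on a machine with a few gigabytes of free RAM, which is why the smaller variant is attractive for a local setup.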

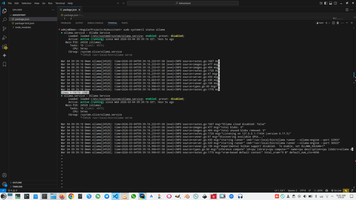

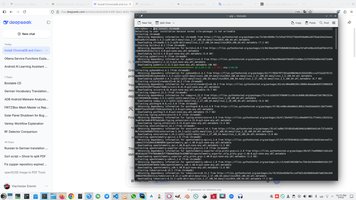

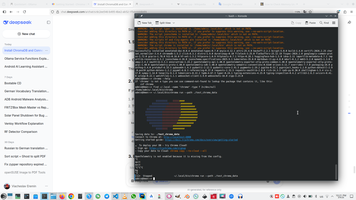

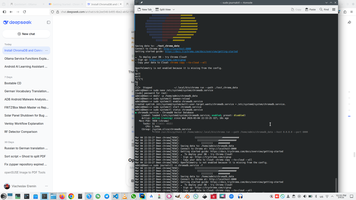

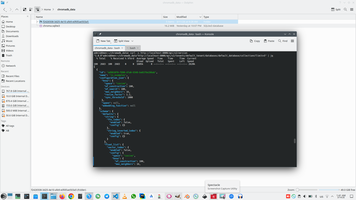

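As you can see in the screenshot above, Ollama returns plain text and an array of numbers (in the "context" node). To build any RAG (Retrieval-Augmented Generation) system, you need to store in the DB not just plain text but vectors. Note that the "context" array is Ollama's encoding of the conversation, which can be sent back with the next request to keep conversational memory; the vectors used for similarity search in a RAG system are embeddings of the text.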
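The request behind such a screenshot can be sketched with the Python standard library alone. This assumes Ollama is listening on its default local address (http://localhost:11434) and that the model has already been pulled; the helper names build_payload, split_reply and generate are my own for this example.

```python
import json
import urllib.request

# Assumption: Ollama runs locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_payload(prompt: str, model: str = "qwen2.5-coder:1.5b") -> dict:
    """Request body for a single, non-streaming generation."""
    return {"model": model, "prompt": prompt, "stream": False}


def split_reply(reply: dict) -> tuple[str, list]:
    """Separate the plain-text answer from the numeric "context" array."""
    return reply.get("response", ""), reply.get("context", [])


def generate(prompt: str, model: str = "qwen2.5-coder:1.5b",
             url: str = OLLAMA_URL) -> tuple[str, list]:
    """POST the prompt to the local Ollama server and return (text, context)."""
    data = json.dumps(build_payload(prompt, model)).encode("utf-8")
    request = urllib.request.Request(
        url, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request) as response:
        return split_reply(json.load(response))
```

Calling generate("Write hello world in Go") returns the answer text together with the list of numbers from the "context" node, so both parts of the reply can be inspected or stored separately.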

Interestingly, Ollama packages models in a format modeled on the OCI (Open Container Initiative) image format that Docker uses, and the Ollama command (ollama pull) has the same form as docker pull. I have pushed a lot of Docker images to DockerHub (for example, see #Docker), but I still haven't pushed anything to Ollama, even though I made my own LLM model, My Own AI LLM Model for learning Deutsch for beginners.

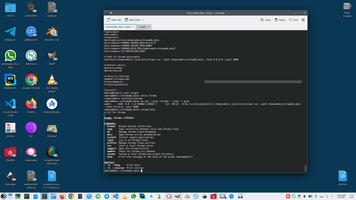

Fortunately, there are many databases that can store vectors (Vector oriented DB); I selected ChromaDB - https://github.com/chroma-core/chroma.

Note that other developers use Redis because it works faster than ChromaDB (Redis vs ChromaDB compare). I have also used Redis many times, for example in Working with Redis in Node.JS projects, but in my current AI projects I use ChromaDB.
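Storing vectors in ChromaDB can look roughly like the sketch below. It assumes the chromadb Python package is installed (pip install chromadb) and that the vectors were already produced elsewhere, for example by an embedding model; the collection name "notes", the storage path and the helper names are hypothetical choices for this example.

```python
def to_records(texts: list[str], embeddings: list[list[float]]) -> dict:
    """Pair each text with its vector and give every pair a stable id."""
    if len(texts) != len(embeddings):
        raise ValueError("texts and embeddings must have the same length")
    return {
        "ids": [f"doc-{i}" for i in range(len(texts))],
        "documents": list(texts),
        "embeddings": [[float(x) for x in vector] for vector in embeddings],
    }


def store_and_query(texts, embeddings, query_embedding,
                    path="./chroma-data", n_results=2):
    """Save the vectors on disk and return the nearest stored documents.

    Assumption: `chromadb` is installed; it is imported lazily so the
    helper above stays usable without it.
    """
    import chromadb

    client = chromadb.PersistentClient(path=path)
    collection = client.get_or_create_collection(name="notes")
    # upsert keeps re-runs safe: existing ids are updated, not duplicated
    collection.upsert(**to_records(texts, embeddings))
    return collection.query(query_embeddings=[query_embedding],
                            n_results=n_results)
```

All vectors in one collection must have the same length, and the query vector has to come from the same embedding model as the stored ones, otherwise the distances are meaningless.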

Continue reading GGUF investigation (LLM model file format).
